Every now and then, an eCommerce client seeks out Impression’s technical SEO services for help with a more unusual issue, something that goes beyond the remit of standard SEO auditing.

Not only does this contribute to the “no-two-days-are-the-same” agency ethos, it also allows for more interesting topics to be shared on our blog. Sharing is caring, after all.

Our co-founder, Aaron Dicks, did exactly this back in February 2016 when he and I worked out how to create custom canonical tags in Magento for a large online retailer of ours. He rounded this off with a detailed write-up on our blog last year.

Fast forward to 2017 and an equally interesting topic has again arisen, this time from another national eCommerce brand: how to use pagination and AJAX for a more SEO-friendly approach to infinite scroll.

This particular client implemented infinite scroll across all of their category pages, and at first glance, why not? Infinite scroll can be great for usability, as it saves the user from clicking through endless paginated component pages, which may disrupt their journey.

However, the problem in this instance was not how the user perceived the infinite scroll but how Googlebot perceived it.

Why JavaScript functionality demands a solid web build first

Google has claimed to understand JavaScript for many years now. Despite this, case studies continue to emerge that call this claim into question.

Most recently, Distilled published an article that hypothesised the negative effects of JavaScript-based internal linking. Sure enough, by changing links to simple HTML, they were able to achieve significant organic uplift for that particular eCommerce client.

In the context of infinite scroll itself, Google has even published some documentation on how to best integrate the functionality into your site for better Googlebot interpretation.

Issues with JavaScript may be exacerbated further if your server response time is slow, as Googlebot may not have the resources to wait for the second request.

With this in mind, we were keen to follow JavaScript SEO best practices by reconfiguring and optimising our JavaScript where possible for this particular eCommerce client.

What was our objective?

Put simply, to improve crawl efficiency and the distribution of link equity.

Even before finding errors regarding infinite scroll, our client was an avid user of “nofollow” attributes across their internal linking structures – 14K nofollow links, to be precise.

By simply removing these attributes, so that the links became followed, we were immediately able to visualise more internal link equity flowing through the site.

Once we worked with their developer to solve the “nofollow” issue, we came across further crawl efficiency problems with OnPage.org reporting 4.5K pages with no internal links.

With everything else sufficiently optimised and nowhere else to go, we decided to focus our efforts on the world of JavaScript – was AJAX the root cause of some pages not receiving internal link equity?

How to turn JavaScript on and off to determine how crawlers “see” your site

By turning JavaScript off at page-level, we’re able to visualise what crawlers can and cannot “see”. From there, if any page content does significantly change, including the disappearance of pagination and internal links, then we can safely presume that a web crawler, i.e. Googlebot, is unable to crawl and pass link equity across these.

To turn off JavaScript via Google Chrome, you need to access the Elements panel. This is accessed by right-clicking anywhere on a page and selecting “Inspect”.

Once the Elements panel opens, head to the top-right of the window and select the cog icon for Settings.

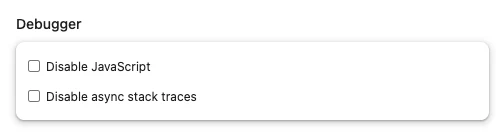

Once the Settings view has opened, you need to find Debugger and click the checkbox next to Disable JavaScript. This is illustrated in the screenshot below:

Once the checkbox has been ticked, you need to reload your page to see how it behaves with JavaScript disabled.

In the case of our eCommerce client, turning JavaScript off showed there was no pagination present. This immediately highlighted that Googlebot would not be able to progress through further pages to crawl the additional products featured.

(Remember, this is isolated to one category page alone; multiplying the issue across all their category pages results in many internal links not being visible to Googlebot.)
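For those who prefer to script the check rather than toggle a browser setting, here is a minimal sketch of the same idea: look only at the raw, unrendered HTML and see whether any pagination links are present. The function names and the /page/N URL pattern are our own assumptions for illustration, and the regex is a rough approximation rather than a full HTML parser.

```javascript
// Collect href values from <a> tags in raw, unrendered HTML.
// Rough regex approach (an assumption for brevity); a real audit
// would use a proper HTML parser or a crawler.
function extractHrefs(html) {
  const hrefs = [];
  const pattern = /<a\b[^>]*?\bhref\s*=\s*(["'])(.*?)\1/gi;
  let match;
  while ((match = pattern.exec(html)) !== null) {
    hrefs.push(match[2]);
  }
  return hrefs;
}

// Keep only links that look like paginated component pages.
// Assumes URLs shaped like /category-page/page/2 (hypothetical pattern).
function findPaginationLinks(html) {
  return extractHrefs(html).filter((href) => /\/page\/\d+\/?$/.test(href));
}
```

Feeding this the source of a category page (for example, the response body of a plain HTTP request) and getting an empty list back would suggest the pagination only exists once JavaScript has run.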

Uncrawlable links mean diluted link equity which, in turn, means weakened crawl efficiency – sad times for Impression SEOs and our clients alike!

At this juncture, we needed to work on implementing an SEO-friendly infinite scroll solution. Here’s how we did it.

Step 1 – Implement HTML pagination

Our first step involved implementing conventional HTML pagination, including the logic to build the component pages.

Once the pagination was built, we tied all the component pages together using Google’s rel="next" and rel="prev" directives to clearly outline the relationship between all paginated content. Further documentation on rel="next" and rel="prev" and Google’s general recommendations on pagination can be found here.

As a final piece of house cleaning, we also added self-referential canonical tags to all component pages.
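To make the tagging concrete, here is a minimal sketch of how the head tags for a single component page might be generated. It is not the client's actual code: the function names, the example.com domain and the assumption that page 1 lives at the bare category URL are all ours.

```javascript
// Build the URL of a component page.
// Assumption: page 1 is the bare category URL, later pages use /page/N.
function componentPageUrl(baseUrl, page) {
  const base = baseUrl.replace(/\/+$/, '');
  return page <= 1 ? base : `${base}/page/${page}`;
}

// Return the <link> tags for one component page:
// a self-referential canonical, plus rel="prev" / rel="next" where they apply.
function buildPaginationHeadTags(baseUrl, page, totalPages) {
  const tags = [`<link rel="canonical" href="${componentPageUrl(baseUrl, page)}">`];
  if (page > 1) {
    tags.push(`<link rel="prev" href="${componentPageUrl(baseUrl, page - 1)}">`);
  }
  if (page < totalPages) {
    tags.push(`<link rel="next" href="${componentPageUrl(baseUrl, page + 1)}">`);
  }
  return tags;
}
```

The first page in a series gets no rel="prev" and the last page gets no rel="next", so a three-page category would call buildPaginationHeadTags('https://www.example.com/category-page', 2, 3) to get all three tags for its middle page.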

Step 2 – Implement pushState

Once we implemented the HTML pagination, we “hid” this with JavaScript and re-enabled the infinite scroll.

From there, we added an event listener that fires when the user scrolls to the end of (what would effectively be) page 1.

At this point, page 2’s products were pulled in via AJAX, while the HTML5 History API (pushState) pushed the new paginated URLs to the browser address bar. To check that this has been implemented correctly, watch the URLs change in your browser’s address bar as you scroll, e.g. /category-page/page/2, /category-page/page/3.
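As a rough illustration of how these pieces can fit together, here is a minimal sketch rather than our client's production code. The names createInfiniteScroll and paginatedPath, the #product-list and .pagination selectors, the data-total-pages attribute and the 200px scroll threshold are all hypothetical. The loader and history object are passed in so the core logic can be exercised outside a browser.

```javascript
// Path of a component page. Assumption: page 1 is the bare category path.
function paginatedPath(basePath, page) {
  const base = basePath.replace(/\/+$/, '');
  return page <= 1 ? base : `${base}/page/${page}`;
}

// Returns a handler to call whenever the user reaches the end of the
// products loaded so far. Resolves to true if a new page was loaded.
function createInfiniteScroll({ basePath, totalPages, loadPage, history }) {
  let currentPage = 1;
  let loading = false;
  return async function onReachEnd() {
    if (loading || currentPage >= totalPages) return false;
    loading = true;
    try {
      const nextPage = currentPage + 1;
      const url = paginatedPath(basePath, nextPage);
      await loadPage(url, nextPage);                  // pull in the next page's products
      history.pushState({ page: nextPage }, '', url); // update the address bar
      currentPage = nextPage;
      return true;
    } finally {
      loading = false;
    }
  };
}

// Browser wiring (skipped when run outside a browser).
if (typeof window !== 'undefined' && typeof document !== 'undefined') {
  const list = document.querySelector('#product-list');     // hypothetical selector
  const pagination = document.querySelector('.pagination'); // hypothetical selector
  if (list) {
    if (pagination) pagination.hidden = true; // hide the HTML pagination from JS users
    const onReachEnd = createInfiniteScroll({
      basePath: '/category-page',
      totalPages: Number(list.dataset.totalPages) || 1, // hypothetical data attribute
      loadPage: async (url) => {
        const response = await fetch(url);
        const doc = new DOMParser().parseFromString(await response.text(), 'text/html');
        list.append(...doc.querySelectorAll('#product-list > *'));
      },
      history: window.history,
    });
    window.addEventListener('scroll', () => {
      const nearBottom =
        window.innerHeight + window.scrollY >= document.documentElement.scrollHeight - 200;
      if (nearBottom) onReachEnd();
    }, { passive: true });
  }
}
```

Because the paginated URLs are real HTML pages, the loader in this sketch simply requests the next component page and lifts its products into the current list; a dedicated JSON endpoint would work just as well.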

To round off the implementation, we also needed to consider tracking this behaviour via Google Analytics. Here, we used GA to push a virtual pageview to the paginated URLs, as below:

ga('send', 'pageview', location.pathname + '/page/2/');
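The hard-coded page number can be generalised with a small helper. This is only a sketch: the sendVirtualPageview name and its parameters are our own invention. It takes the category's base path rather than reading location.pathname, on the assumption that pushState may already have changed the address bar to a /page/N URL by the time the pageview is sent.

```javascript
// Send a virtual pageview for a paginated component page.
// `ga` is the analytics.js command queue function; it is passed in so the
// helper can be tried out with a stand-in function.
function sendVirtualPageview(ga, basePath, page) {
  const path = `${basePath.replace(/\/+$/, '')}/page/${page}/`;
  ga('send', 'pageview', path);
  return path;
}
```

In the browser this would be called as sendVirtualPageview(window.ga, '/category-page', 2) each time a new page of products is appended.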

The solution

John Mueller assembled a useful example of how all this fits together here: http://scrollsample.appspot.com/items

(Scroll down the page to see the URL visibly change and switch JavaScript off to see the HTML pagination working in the background.)

What’s left is a fluid user experience and an implementation that’s SEO-friendly, allowing all your content to be crawled regardless of where it lies within the infinite scroll.

If you’re a business owner whose site uses slightly more advanced functionality and you’re wondering how this impacts your SEO, feel free to get in touch with Impression’s technical SEO team today.